I recently read an article about the way pinterest save data. After some rapid growth, they experimented with a variety of setups (which did not work too well), they devised a simple (relatively speaking) system to save data across an array of MySQL databases. The idea behind this being that you could initially put all the databases on a single server and as demand grows, move these databases out to their own servers.

Part of this approach involved saving data for a specific URL. This involved doing a hash and running a modulus operation on a URL that returns a number between a certain value (e.g. 1 – 4096) to determine where the data would be stored for that URL. So for example, a hash would be ran on the string ‘example.com’ and that would return 3 which would place it on db0003, ‘example.com/1’ would return 2034 which would place it on ‘db2034’ and so forth. The idea around this being that data would be evenly distributed between the databases.

I’ve been thinking about a project involving saving large amounts of data in a distributed fashion and was quite taken by this because of it’s simple approach to sharding. However, how evenly data were distributed was something I wondered about. After some initial poking around, it seemed that crc32 was a good candidate due to it not being particularly resource intensive compared to md5 and sha*.

However, two questions sprang to mind:

* How evenly was distributed between the shards.

* Are there no discrepancies between the hash function

I was pretty sure the answers to the questions would be ‘quite well’, and ‘there are no discrepancies’ respectively but did some tests anyway. After all, the last thing we want is for one implementation to give a different result from another.

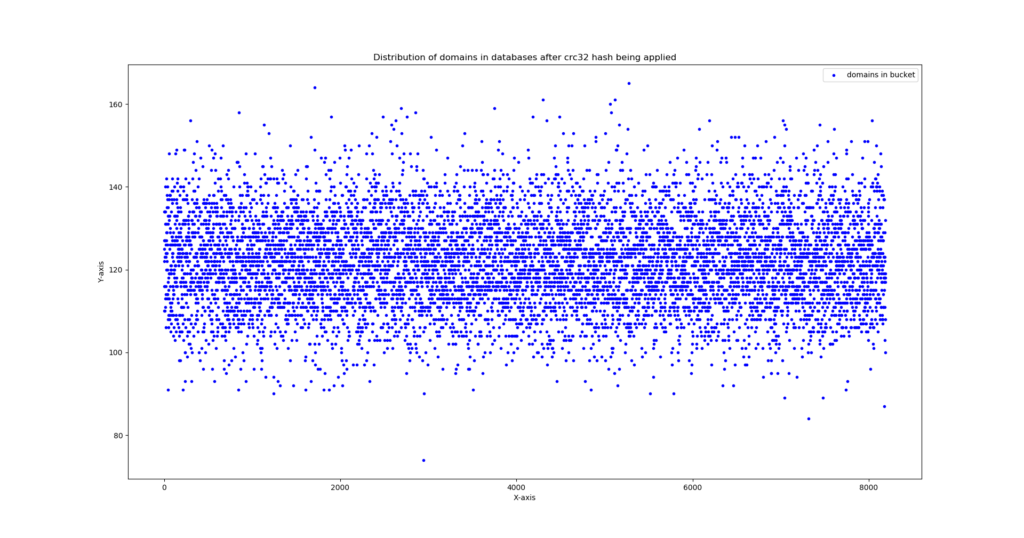

The test strings were ran on the majestic millions top domains and what the code does is create an array of all the shard id’s with each one initialized at 0. Then go through that majestic millions text file line by line and does a hash (and modulus) to get the shard id. Once the shard id is retrieved, iterate the array with the shard id’s by 1. The graph below shows the result of those hashes and shows how many domains each bucket has.

The x axis shows the shard id and the y axis shows the number of records in each shard. Click to embiggen

What we can see from eyeballing the graph it is that data are roughly evenly distributed between the shards. A few outliers here and there but most shards have between 100 and 140 items. Another script which compares the shard result of each implementation demonstrated there were no discrepancies either.

All code can be found here. Any questions, feel free to comment and I’ll get back asap.